How to Read Broadcast Variables in Executors Pyspark

During my semester project, I was faced with the chore of processing a large information set (6 TB) consisting of all the revisions in the English Wikipedia till October 2016. We chose Apache Spark every bit our cluster-calculating framework, and hence I ended upwards spending a lot of time working with it. In this post, I want to share some of the lessons I learned throughout the utilise of PySpark, Spark's Python API.

Spark is a framework to build and run distributed data manipulation algorithms, designed to exist faster, easier and to support more types of computations than Hadoop MapReduce. In fact, Spark is known for beingness able to keep large working datasets in retentivity between jobs, hence providing a performance boost that is up to 100 times faster than Hadoop.

Although it is written in Scala, Spark exposes the Spark programming model to Java, Scala, Python and R. While I had the opportunity to develop some small Spark applications in Scala in a previous class, this was the first time I had to handle this amount of data and nosotros agreed to utilize the PySpark API, in Python, equally Python is now get the lingua franca for data science applications. Moreover, using the Python API has a negligible performance overhead compared to the Scala one.

PySpark

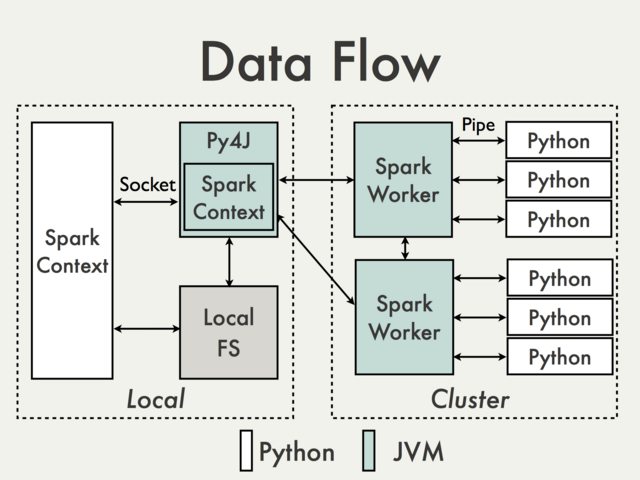

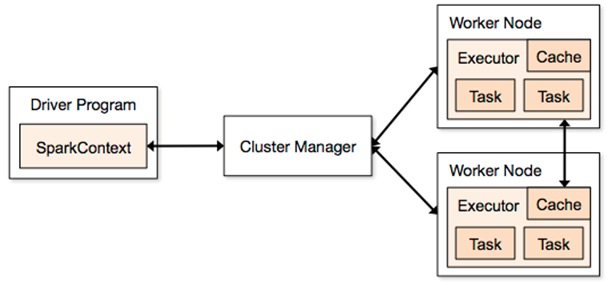

PySpark is really built on superlative of Spark's Java API. In the Python driver plan, SparkContext uses Py4J to launch a JVM which loads a JavaSparkContext that communicates with the Spark executors beyond the cluster. Python API calls to the SparkContext object are so translated into Java API calls to the JavaSparkContext, resulting in information being processed in Python and cached/shuffled in the JVM.

RDD (Resilient Distributed Datasets) is defined in Spark Core, and it represents a collection of items distributed across the cluster that can be manipulated in parallel. PySpark uses PySpark RDDs which are just RDDs of Python objects, such every bit lists, that might shop objects with different types. RDD transformations in Python are then mapped to transformations on PythonRDD objects in Java.

Spark SQL and DataFrames

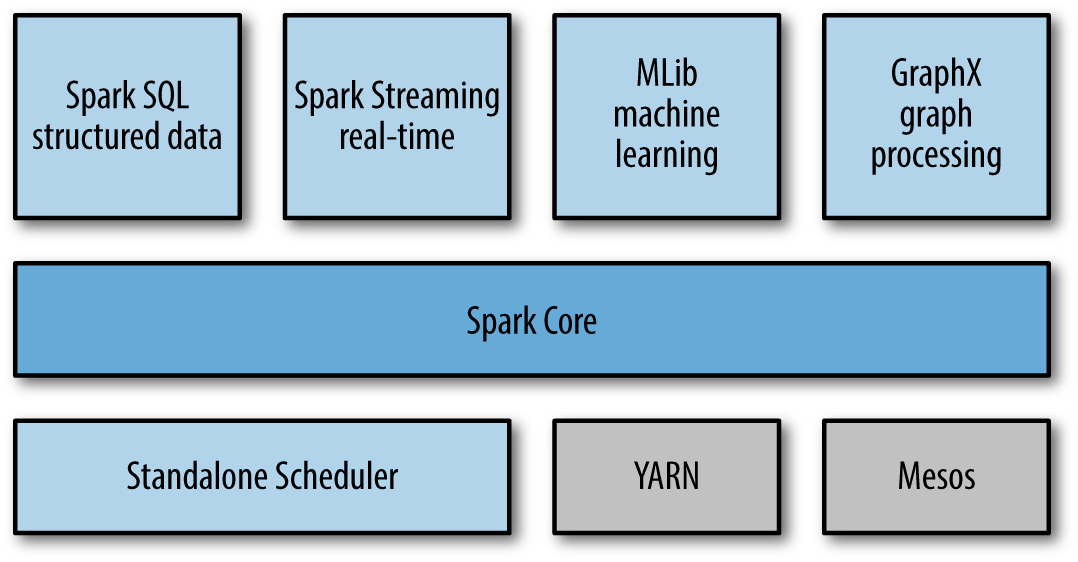

At its core, Spark is a computational engine that is responsible for scheduling, distributing, and monitoring applications consisting of many computational tasks on a calculating cluster. In add-on, Spark too supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

Whenever analyzing (semi-)structured data with Spark, it is strongly suggested to brand use of Spark SQL: The interfaces provided past Spark SQL enrich Spark with more information nigh the structure of both the data and the computation being performed, and this actress information is also used to perform further optimizations. At that place are several means to interact with Spark SQL including SQL, the DataFrames API and the Datasets API. In my project, I but employed the DataFrame API as the starting data set is available in this format.

A DataFrame is a distributed collection of data (a drove of rows) organized into named columns. Information technology is based on the data frame concept in R or in Pandas, and it is similar to a table in relational database or an Excel sheet with column headers. DataFrames can be constructed from a wide array of sources such as: structured data files, tables in Hive, external databases, or existing RDDs; and they as well share some common characteristics with RDD: they are immutable, lazy and distributed in nature.

Implementation all-time practices

Broadcast variables. When you accept a large variable to be shared across the nodes, use a broadcast variable to reduce the advice toll. If yous don't, this same variable will exist sent separately for each parallel performance. Also, the default variable passing machinery is optimized for small variables and can be irksome when the variable is large. Broadcast variables let the programmer to keep a read-only variable cached, in deserialized form, on each machine rather than shipping a copy of information technology with tasks. The circulate of variable five tin exist created by bV = sc.broadcast(v). And so value of this broadcast variable can be accessed via bV.value.

Parquet and Spark. It is well-known that columnar storage saves both time and space when information technology comes to big information processing. In item, Parquet is shown to boost Spark SQL operation by 10x on average compared to using text. Spark SQL provides support for both reading and writing parquet files that automatically capture the schema of the original information, so in that location is really no reason non to use Parquet when employing Spark SQL. Saving the df DataFrame as Parquet files is as easy as writing df.write.parquet(outputDir). This creates outputDir directory and stores, under information technology, all the part files created past the reducers as parquet files.

Overwrite save way in a cluster. When saving a DataFrame to a data source, by default, Spark throws an exception if information already exists. Nonetheless, It is possible to explicitly specify the behavior of the save operation when information already exists. Among the available options, overwrite plays an important role when running on a cluster. In fact, it allows to successfully consummate a job even when a node fails while storing data into disk, allowing some other node to overwrite the partial results saved past the failed 1. For instance, the df DataFrame can be saved as Parquet files using the overwrite relieve style by df.write.manner('overwrite').parquet(outputDir).

Clean code vs. operation. When processing a large amount of data, you lot may need to trade writing clean code for a performance boost. For example, I once reported that filtering a specific assortment by creating a new one via listing comprehension (ane line) earlier processing it was an order of magnitude slower than writing a longer for loop containing the required conditional statements forth with the processing steps. This is because a new array was beingness created and additional fourth dimension to classify it is required. While this might seem a negligible quantity, when the book of data is huge, this can make the difference between a feasible and an unfeasible operation.

Process data in batches. While I was initially required to process our original DataFrame in batches due to the cluster configurations, this actually resulted in a very functional method to process data in later stages too. The partial results of each batch tin then but exist merged together and this approach can be very helpful every bit (i) some nodes might go downwardly and pb your chore to fail, forcing you lot to rerun it on the entire dataset; and (ii) this might exist the but methodology to crisis your data if your application is memory-bounded. Moreover, merging all the partial DataFrame I obtained after each stage was an extremely fast and cheap operation, marginalizing the additional overhead.

Run multiple regexs in a cluster. This last point might cause you to jolt, and I was surprised when I found it out. When y'all need to match multiple regex patterns, you commonly piping them (| operator) into a single ane and become through a text merely once. In fact, this is usually faster as reading from disk is expensive. For instance, in a sample data set I had in my laptop, this resulted in a 3-infinitesimal faster execution than running each regex separately (seven vs. 10 minutes). All the same, when I ran this experiment on a larger drove in a cluster, I noticed that running each regex separately was slightly faster than group them together. And so, you can skip all the tests needed to ensure that the different regexs practise not interfere with each other when grouped together and directly develop your application using multiple regexs.

Tuning your Spark application

I spent nearly of my time in tuning the amount of resources required to successfully complete dissimilar jobs. While request for an insufficient amount of retentiveness might lead a chore to fail, requesting unnecessary resource comes with a cost that might be significant for long runs. In general, information technology is of import to understand how to properly determine the amount of resources required to run a given job.

The most relevant settings are:

-

--num-executors: number of executors requested -

--executor-cores: number of cores per executor requested -

--executor-memory: executor JVM heap size -

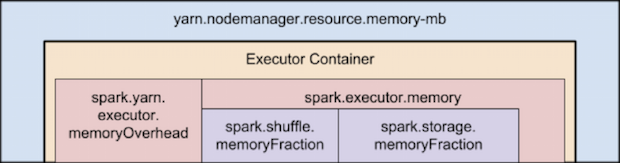

--conf spark.yarn.executor.memoryOverhead: determines full memory request (in MB) to YARN for each executor. Default: max(384, 0.07*spark.executor.retentivity) -

--driver-memoryand--driver-cores: resources for the application master

When using PySpark, information technology is noteworthy that Python is all off-heap memory and does not use the RAM reserved for heap. In fact, remember that PySpark starts both a Python process and a Java one. The Java process is what uses heap retentiveness, while the Python process uses off heap. So, you may demand to decrease the amount of heap memory specified via --executor-memory to increase the off-heap retention via spark.yarn.executor.memoryOverhead.

Likewise, running tiny executors (with a single cadre, for instance) throws abroad benefits that come from running multiple tasks in a single JVM. For instance, broadcast variables demand to be replicated once on each executor, and then many pocket-size executors will result in many more copies of the data.

The number of cores yous configure is another important cistron as it affects the number of concurrent tasks that can be run in memory at one time. This in turn affects the corporeality of execution retention being used by each tasks as information technology is shared between them. For example, with 12GB heap memory running eight tasks, each one roughly gets about 1.5GB.

And then, how do I melody my Spark application? My suggestion is to beginning with a per-core mindset and and so scale out. Consider, for example, the IC Hadoop cluster with 7 nodes, each equipped with 38 VCores and 238 GB of RAM.

- Start by running a single-core worker executor per node and endeavor to estimate the corporeality of memory information technology might exist needed by a unmarried core to process your information without failing.

- Once you have adamant the retentivity requirements of your awarding, you can increment the number of cores per executor to N while assigning each of them roughly North times the memory y'all established. As I said before, having more cores per executor allows to have some benefits as they run in a single JVM, but too many cores per executor might slow yous downward at writing time. Hence, this is a value that strongly depends on your awarding.

- You tin can then scale out in terms of number of executors with the constraints of the number of available cores and RAM per machine (respectively, up to 38 and 238GB in our case if your are the only user of the cluster). Moreover, recall that an additional container is used past the commuter.

Common errors and how to fix them

When developing your Spark application, y'all come up across very long stack traces whenever an exception is raised. Hence, information technology might exist a bit hard to discover the cause of your error and how to solve it, especially when this is due to resource allocation. Here are presented some of the nigh frequently errors I met during my semester projection and what I did to fix them.

-

… Consider boosting spark.yarn.executor.memoryOverhead. When this message is displayed, just request a larger amount of off heap retention via

--conf spark.yarn.executor.memoryOverhead=<N_MB>, where<N_MB>is the number of MB requested per executor. -

java.lang.OutOfMemoryError: Java heap infinitejava.lang.OutOfMemoryError: GC overhead limit exceededWhen either of these errors is thrown, consider boostingdriver-memoryand/orexecutor-retentivity. In particular, requesting a larger amount of the driver retention might seem counterintuitive in some context but tin actually solve the problem without requesting a larger amount of retention for each executor. -

coffee.lang.NullPointerExceptionWhen this exception is raised, the crusade might be in the cluster itself. I came beyond this expection when the service was downward in one of the cluster, and when there was no storage left in my output directory. -

Serialized results … is bigger than spark.driver.maxResultSize When this message is displayed, just prepare a larger maximum size for a variable that needs to be collected at the driver via

--conf spark.driver.maxResultSize=<N>G, where<N>is the number of GB requested.

Conclusions

Determining the correct amount of resources requested by a Spark application might take more than time than you expect. This postal service intends to give you some insights on how to reduce it by firstly looking at single-core executors and and then scaling out, as the memory assigned to each executor is, more or less, every bit dissever among its cores.

Other take-aways are the internal structure of PySpark and its off-heap retentivity requirements, which I was initially unaware of, and the lists of best practices and of common errors that sprouted during my semester-long research.

These were overall the tasks were I spent nigh of my time. There are different resources online that can help you get a improve understanding of what is going under the hood when you run a Spark application, but information is scattered among several sources and might take you a while to excerpt the piece you are looking for. Had I had this mail bachelor at the fourth dimension of my semester project, it would have saved me substantial amount of searching time, and I hope this can really be helpful to yous!

Source: https://dlab.epfl.ch/2017-09-30-what-i-learned-from-processing-big-data-with-spark/

0 Response to "How to Read Broadcast Variables in Executors Pyspark"

Post a Comment